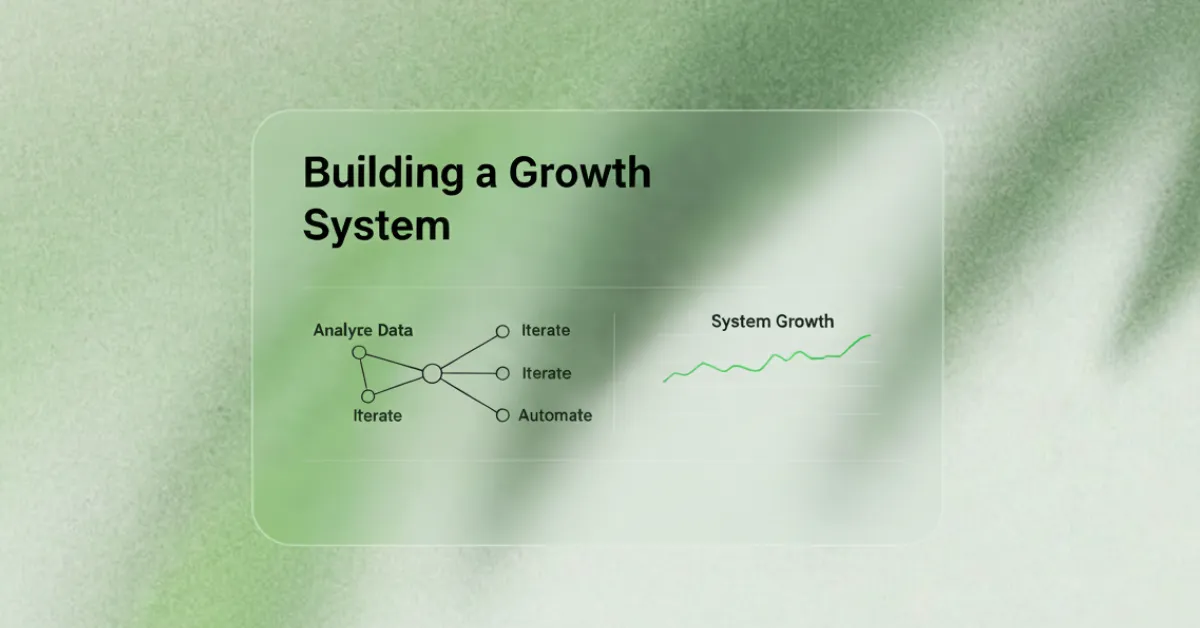

Building a Growth System, Not a Campaign Treadmill

There’s a pattern we see when working with founders who’ve been running paid ads for two or three years. They describe it like this: “We’re spending $15K a month and hitting targets, but it never feels stable. Every winning campaign degrades in weeks. We’re constantly launching new creative to replace what stopped working. I don’t know if we could scale this.”

They’re not describing a marketing problem. They’re describing a systems problem.

They’ve built on campaigns—discrete things with beginnings and endings—instead of systems that learn and compound. Campaigns run out. Creative fatigues. Audiences saturate. What worked last quarter stops working this quarter, and you’re back at zero.

The brands that scale paid acquisition profitably have built infrastructure underneath the campaigns. Systems for learning what works. Processes for turning learnings into better creative. Mechanisms for compounding insights instead of starting from scratch every month.

The Treadmill Pattern

Month 1: Launch a campaign, test creative, scale the winners, revenue increases.

Month 2: The winning creative degrades. CPM rises, CTR drops, conversion falls.

Month 3: You need fresh creative. You scramble to produce new concepts. Some perform, some don’t.

Months 4–6: Repeat. The campaigns that worked in Month 3 are fatiguing by Month 5.

At no point are you building on what you’ve learned. You’re not understanding why certain creative resonated. You’re not developing a theory of what makes customers convert. You’re just churning through campaigns, hoping each one performs well enough to justify next month’s budget.

After a year or two, most founders are exhausted. They’ve spent hundreds of thousands and generated revenue, but nothing felt sustainable. They’re running to stay in place.

How Systems Work Differently

Campaigns have ends. Systems have feedback loops.

When a campaign stops working, you learn nothing. It just stops. When a system stops working, you understand why, and that understanding improves every future iteration.

A Real Example: Furniture Brand in Malaysia

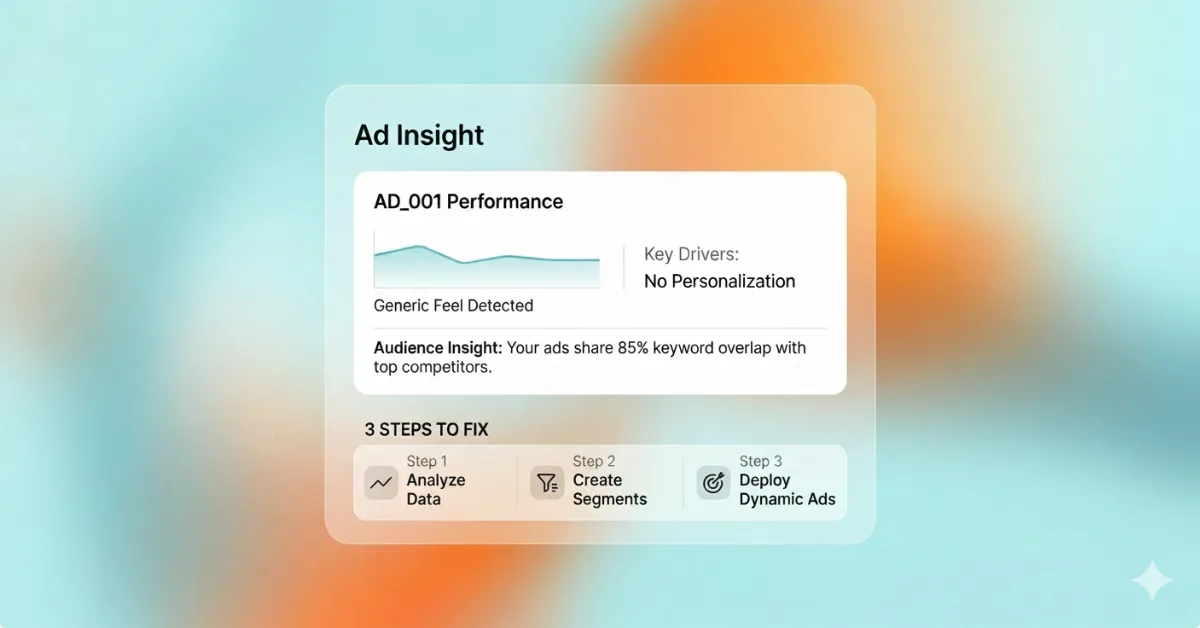

The brand was spending $12K a month on Meta ads and launching new campaigns every 4–6 weeks. After 18 months, revenue was acceptable (they were roughly breaking even on acquisition), but nothing felt predictable.

What we did: We paused all new launches and worked out what they’d already learned without realizing it.

We pulled 300+ pieces of creative from the past 18 months and categorized them by concept:

- Lifestyle-focused (furniture in beautiful rooms)

- Feature-focused (construction quality)

- Outcome-focused (how furniture solves specific problems)

- Social proof (reviews, testimonials)

- Price-focused (discounts, promotions)

A pattern emerged that they’d never noticed: outcome-focused creative consistently outperformed everything else. Ads showing specific furniture solving specific problems (a small table for apartments, storage for families with kids, desk setups for remote work) converted 3–4x better than lifestyle photography.

They’d never synthesized this learning, so they kept producing all five types in equal proportions.

We did the same with audiences. Over 18 months, they’d tested dozens of targeting approaches. Two types emerged as clear winners:

- Lookalikes built from highest-LTV customers

- Interest targeting around life transitions (moving, new marriage, first child)

Broad interest targeting and generic lookalikes performed 40–50% worse on every metric. The evidence was already in their account data. They’d just never systematized it.

What changed: We rebuilt their acquisition around outcome-focused creative and high-LTV audiences. Instead of producing random new concepts each month, we built a framework for testing different executions of the same winning pattern. We put 80% of the budget behind the two audience types that worked and used the remaining 20% to test refinements.

Most importantly, we built a process: every month, analyze which outcome-focused messages resonated most, document why they worked, and use those insights to inform the next month’s creative.

Results: Within three months, CAC dropped 42% while maintaining revenue. By month 6, they’d scaled from $12K to $28K monthly without increasing CAC. Performance became predictable. They could forecast what new creative would perform based on whether it followed the patterns we’d identified.

The breakthrough wasn’t finding one winning campaign. It was building infrastructure that could generate winning campaigns consistently.

What Actually Separates Systems from Treadmills

Documented patterns over individual results

Most brands track campaign performance: “Campaign A got 2.3% CTR, Campaign B got 4.1%.” They don’t track concept performance: “Outcome-focused creative consistently outperforms lifestyle creative by 3x.”

Patterns generalize. If you know outcome-focused creative works, you can make many different outcome-focused ads and expect them to perform reasonably. If you only know Campaign B worked, you have no idea what to make next.
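To make concept-level tracking concrete, here is a minimal Python sketch that rolls creative-level results up to concept level. It assumes each ad has already been hand-tagged with a concept label and that impressions, clicks and purchases can be exported per creative. The `concept` field, the labels and the numbers in the example are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class CreativeResult:
    creative_id: str
    concept: str      # hypothetical hand-applied tag, e.g. "outcome", "lifestyle"
    impressions: int
    clicks: int
    purchases: int


def concept_performance(results):
    """Aggregate creative-level results into CTR and conversion rate per concept."""
    totals = defaultdict(lambda: {"creatives": 0, "impressions": 0, "clicks": 0, "purchases": 0})
    for r in results:
        t = totals[r.concept]
        t["creatives"] += 1
        t["impressions"] += r.impressions
        t["clicks"] += r.clicks
        t["purchases"] += r.purchases

    summary = {}
    for concept, t in totals.items():
        summary[concept] = {
            "creatives": t["creatives"],
            # Guard against division by zero for concepts with no delivery yet.
            "ctr": t["clicks"] / t["impressions"] if t["impressions"] else 0.0,
            "cvr": t["purchases"] / t["clicks"] if t["clicks"] else 0.0,
        }
    return summary


def relative_lift(summary, metric, baseline):
    """Ratio of each concept's metric to a baseline concept's metric."""
    base = summary[baseline][metric]
    return {c: (s[metric] / base if base else float("nan")) for c, s in summary.items()}


# Illustrative, made-up numbers: two outcome-focused ads and one lifestyle ad.
results = [
    CreativeResult("ad-1", "outcome", 1000, 40, 4),
    CreativeResult("ad-2", "outcome", 1000, 60, 6),
    CreativeResult("ad-3", "lifestyle", 2000, 50, 2),
]
summary = concept_performance(results)
lift = relative_lift(summary, "cvr", baseline="lifestyle")
```

In this toy data, outcome-focused ads convert about 2.5x better than lifestyle ads. Because that statement is about a concept rather than a single campaign, it can inform ads you haven’t made yet. With only a few creatives per concept, treat such a lift as a hypothesis to keep testing, not a settled fact.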

Continuous testing within proven frameworks

A treadmill approach treats every test as equally uncertain. A systems approach tests within frameworks you’ve already validated. You know outcome-focused creative works, so now you’re testing different ways to express outcomes. You know high-LTV lookalikes convert better, so now you’re testing refinements.

This compounds learning. Each test makes the framework slightly better instead of trying to discover an entirely new framework from scratch.

Performance metrics connected to business outcomes

Campaign treadmills optimize for campaign metrics: CTR, CPM, conversion rate. Growth systems optimize for what actually drives the business: CAC relative to LTV, payback period, contribution margin after acquisition costs, repeat purchase rate.

You might have a campaign with great conversion rates that attracts low-LTV customers who never buy again. Campaign-level metrics call this a success. Business-level metrics call it a drain.
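A simplified way to put campaign metrics and business metrics side by side is sketched below. It makes several assumptions:

- Ad spend and new customers can be attributed to each campaign’s cohort.
- Expected lifetime orders per customer can be estimated.
- LTV is gross-margin LTV without discounting.
- Payback is counted in orders rather than months, to keep the arithmetic simple.

The class, field names and figures are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class CohortEconomics:
    spend: float             # ad spend attributed to this cohort
    new_customers: int
    avg_order_value: float
    gross_margin: float      # fraction of order value kept, e.g. 0.4
    expected_orders: float   # estimated lifetime orders per customer (assumption)

    @property
    def cac(self) -> float:
        return self.spend / self.new_customers

    @property
    def margin_per_order(self) -> float:
        return self.avg_order_value * self.gross_margin

    @property
    def ltv(self) -> float:
        # Simplified gross-margin LTV: no discounting, no churn curve.
        return self.margin_per_order * self.expected_orders

    @property
    def ltv_to_cac(self) -> float:
        return self.ltv / self.cac

    @property
    def orders_to_payback(self) -> int:
        # Orders needed before cumulative margin covers the acquisition cost.
        return math.ceil(self.cac / self.margin_per_order)

    @property
    def contribution_after_acquisition(self) -> float:
        return self.ltv - self.cac


# Hypothetical campaigns: A acquires twice as many buyers, but they rarely return.
campaign_a = CohortEconomics(5000, 100, 200, 0.4, expected_orders=1.1)
campaign_b = CohortEconomics(5000, 50, 200, 0.4, expected_orders=3.0)
```

Campaign A looks better on conversions, with half the CAC. However, each of its customers contributes about $38 after acquisition cost, compared with about $140 for Campaign B. That gap is what campaign-level dashboards miss.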

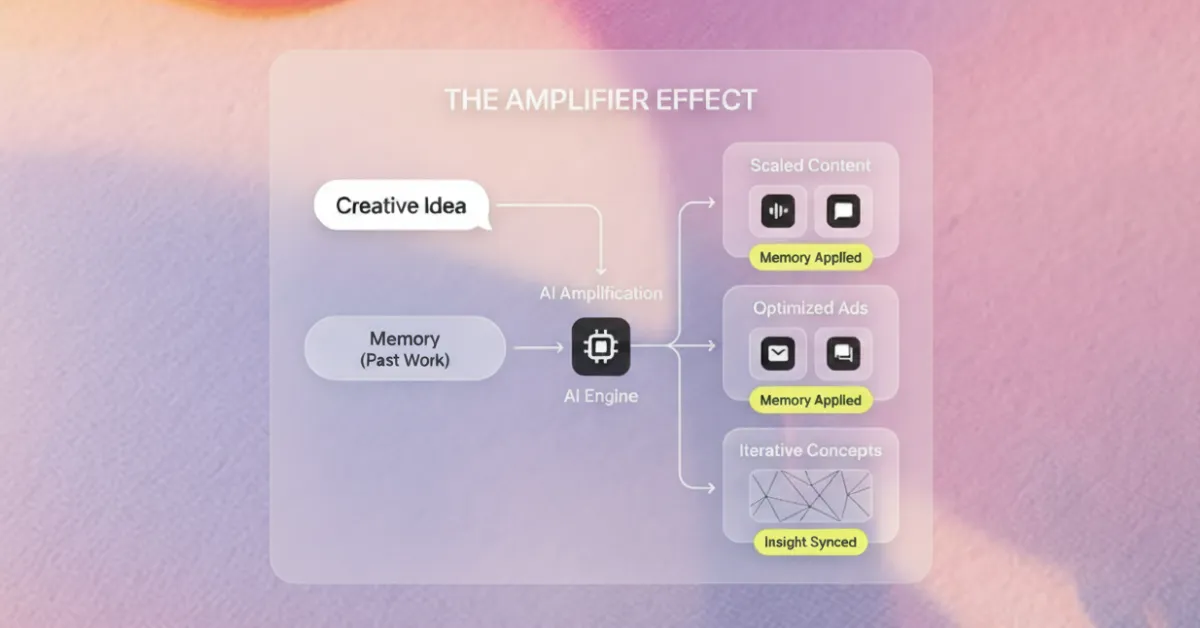

Infrastructure for institutional memory

The treadmill’s defining characteristic is that nothing accumulates. Last quarter’s learnings don’t inform this quarter’s decisions because there’s no process for capturing them.

Growth systems create institutional memory—ways for your team (and future hires) to understand what you’ve learned without re-learning it through expensive testing.

After every significant test, spend 30 minutes documenting: What were we testing? What did we learn? What does this suggest we should do differently? What questions did this raise that we should test next?

This practice alone—regularly documented learning—is the difference between campaigns that end and systems that improve.
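One lightweight way to capture the four documentation questions is an append-only log. The sketch below writes each entry as a single line of JSON so that entries can be filtered later, for example by concept. The file format, the class and function names, the `concept` tag and the example entry are hypothetical. A shared doc or spreadsheet serves the same purpose.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass, field
from datetime import date
from pathlib import Path


@dataclass
class LearningEntry:
    test_name: str
    what_we_tested: str
    what_we_learned: str
    what_to_change: str
    next_questions: list[str] = field(default_factory=list)
    concept: str = ""  # optional concept tag so learnings can be grouped later
    logged_on: str = field(default_factory=lambda: date.today().isoformat())


def log_learning(entry: LearningEntry, path: Path) -> None:
    """Append one entry to the log as a single JSON line."""
    with path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(entry)) + "\n")


def load_learnings(path: Path, concept: str | None = None) -> list[dict]:
    """Read all entries back, optionally filtered to one concept."""
    if not path.exists():
        return []
    lines = path.read_text(encoding="utf-8").splitlines()
    entries = [json.loads(line) for line in lines if line.strip()]
    if concept is not None:
        entries = [e for e in entries if e.get("concept") == concept]
    return entries


# Hypothetical entry; the bracketed text stands in for real findings.
example = LearningEntry(
    test_name="outcome-hooks-round-1",
    what_we_tested="Problem-first vs product-first openings in outcome-focused ads",
    what_we_learned="[record the observed result here]",
    what_to_change="[what next month's creative should do differently]",
    next_questions=["Does the winning opening hold for the storage range?"],
    concept="outcome",
)
```

Filtering by concept is the point of the tag. Before briefing the next round of creative, you can pull up every past learning about that concept instead of rediscovering it through new tests.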

The Transition Path

Phase 1: Stop and Synthesize (Month 1)

Don’t launch new campaigns. Understand what you’ve already learned. Pull every campaign from the past 6–12 months. Categorize by concept, not just performance. Look for patterns.

What types of creative consistently perform better? What audiences deliver better quality customers? Document these patterns. This is your foundation.

Phase 2: Build Your Framework (Month 2)

Based on the patterns you identified, create a testing framework. Define 2–3 creative concepts that consistently work and commit to exploring variations. Define 2–3 audience types that deliver the best business outcomes and focus the majority of your budget there.

You’re still testing, but testing within a framework informed by what you’ve learned.
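As a starting point for that allocation, here is a hedged sketch that reuses the 80/20 split from the furniture example. A fixed share of the budget goes to proven segments, and the rest funds tests. Splitting evenly within each group is a simplifying assumption, as are the function name and segment names. In practice you would probably weight proven segments by their business outcomes.

```python
def allocate_budget(total, proven, tests, proven_share=0.8):
    """Split a monthly budget between proven segments and test segments.

    Each group is divided evenly (a simplifying assumption). If there are
    no tests, the whole budget goes to the proven segments.
    """
    if not proven:
        raise ValueError("need at least one proven segment")
    if not 0.0 <= proven_share <= 1.0:
        raise ValueError("proven_share must be between 0 and 1")
    if set(proven) & set(tests):
        raise ValueError("a segment cannot be both proven and under test")

    proven_pool = total if not tests else total * proven_share
    allocation = {name: proven_pool / len(proven) for name in proven}
    if tests:
        # Derive the test pool by subtraction so the two pools always sum to total.
        test_pool = total - proven_pool
        allocation.update({name: test_pool / len(tests) for name in tests})
    return allocation


# Hypothetical monthly plan built around the two audience types from the example.
plan = allocate_budget(
    28_000,
    proven=["high_ltv_lookalikes", "life_transition_interests"],
    tests=["lookalike_refinement", "new_transition_segment"],
)
```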

Phase 3: Establish Learning Loops (Month 3+)

Build processes for continuous improvement. Weekly: review performance, identify what’s working. Monthly: analyze concept-level patterns, document insights, plan next month’s tests. Quarterly: synthesize everything you’ve learned, update your framework, identify capability gaps.

Phase 4: Scale What Compounds (Month 6+)

Once you have systems that generate predictable results, you can scale with confidence. You’re not just adding budget. You’re scaling a system that has demonstrated it can consistently generate profitable customers.

Why This Matters Now

The paid ads landscape keeps getting more competitive. CPMs rise. Audiences fragment. Attribution becomes more complex. The brands that survive aren’t the ones with the biggest budgets. They’re the ones with the best systems.

If you’re still on the campaign treadmill, every month gets harder. You’re competing against companies that have accumulated years of learning, that understand their customers more deeply, that can produce effective creative more efficiently because they’ve systematized what works.

Building systems takes longer upfront. You have to resist launching the next campaign and hoping it works. You have to invest time in understanding patterns, documenting learnings, building processes.

But systems compound. Every month, your decision-making improves. Every test adds to your knowledge base. Every campaign builds on what came before instead of starting from scratch.

Eventually what felt like slower progress becomes faster progress, because you’re not running just to stay in place anymore. You’re actually moving forward.
